Data Protection & AI Agent — Customer FAQ

Last updated: May 29, 2026

Overview

Teamspective's AI agent (Lea) processes user data to provide contextual assistance with goals, feedback, and personal development. This article explains how data protection has been considered in the design and implementation of the AI agent, and what customers should be aware of when handling sensitive information.

What AI models and service providers does Teamspective use?

Teamspective uses Large Language Models (LLMs) as the core AI technology. Specifically:

- AI Models: GPT models (via Azure OpenAI) and Claude models (provided by Anthropic, accessed via Azure AI Foundry)

- Technology Providers: Microsoft Azure OpenAI, Anthropic

- Service Location: AI processing via Azure OpenAI is handled in Microsoft's data centres in Sweden, which keeps that processing within the EU and supports GDPR compliance. Anthropic's Claude models carry no location guarantee: processing may take place outside the EU.

Teamspective uses privately hosted LLMs deployed solely for Teamspective's use, not public or shared consumer environments. All prompts, use case logic, data inputs, and access controls are built and managed in-house.

What data does the AI agent access?

The AI agent only accesses data that the authenticated user is already authorised to see within their workspace. This includes:

- Feedback and goal-related text (when the user requests it)

- Engagement results (with small-group suppression — see below)

- Personal development context relevant to the active session

Access is scoped per user session and workspace, with explicit checks to prevent cross-workspace data access. Data is not pooled or shared between customer accounts.
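
For readers who want a concrete picture of what session and workspace scoping means, here is a minimal sketch in Python. It is an illustration only: the `Session` and `Record` types and the `records_for_agent` function are hypothetical names, not Teamspective's actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    user_id: str
    workspace_id: str


@dataclass(frozen=True)
class Record:
    record_id: str
    workspace_id: str
    visible_to: frozenset  # IDs of users already authorised to see this record


def records_for_agent(session: Session, records: list[Record]) -> list[Record]:
    """Return only the records the session's user may see in their own workspace."""
    allowed = []
    for record in records:
        if record.workspace_id != session.workspace_id:
            continue  # explicit cross-workspace check
        if session.user_id not in record.visible_to:
            continue  # the user is not authorised to see this record
        allowed.append(record)
    return allowed


# Hypothetical example data
session = Session(user_id="u1", workspace_id="ws-a")
records = [
    Record("r1", "ws-a", frozenset({"u1"})),  # same workspace, authorised
    Record("r2", "ws-b", frozenset({"u1"})),  # different workspace
    Record("r3", "ws-a", frozenset({"u2"})),  # same workspace, not authorised
]
visible_ids = [r.record_id for r in records_for_agent(session, records)]
```

In this sketch both checks run before anything is passed on as model context, so a record from another workspace is dropped even if the user ID happens to match.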

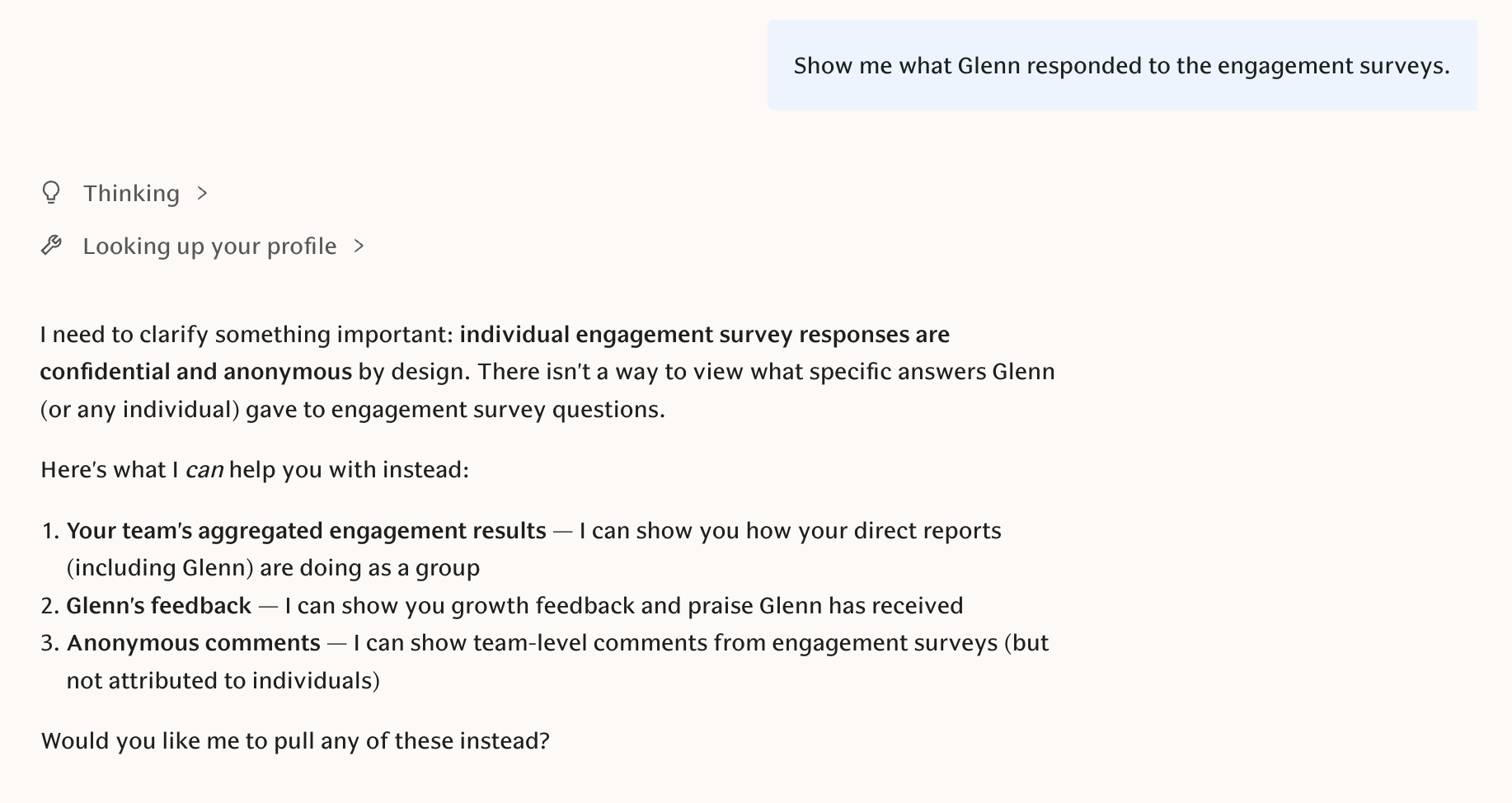

Can I access someone's personal engagement responses with the AI Agent?

No. The AI agent only accesses data that the authenticated user is already authorised to see within their workspace. If a user asks the agent for a specific person's results, the agent will refuse.

Is data sent to AI models every time I open a page?

This depends on which AI functionality you are using in Teamspective:

AI agent: Data is only sent to AI-powered services when you actively prompt the agent (e.g. generating summaries, analysing feedback, suggesting actions, preparing for 1-1s).

AI summaries, built-in coaches: The engagement comment and feedback summaries are generated when you open the page. The feedback and documents coaches also analyse your input automatically.

Where possible, AI-generated responses are cached and reused. For example, your feedback summary will remain unchanged until new data has been collected, avoiding unnecessary reprocessing.
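
The caching behaviour described above can be pictured with the following Python sketch. It assumes the cache key is derived from a fingerprint of the underlying data, which is one common way to do this and not necessarily how Teamspective implements it. `SummaryCache` and `fake_generate` are hypothetical names, and `fake_generate` stands in for the real model call.

```python
import hashlib
import json


class SummaryCache:
    """Reuse a generated summary until the underlying data changes."""

    def __init__(self, generate):
        self._generate = generate  # hypothetical stand-in for the model call
        self._store = {}
        self.model_calls = 0

    def get_summary(self, user_id, feedback_items):
        # Fingerprint the data so that identical inputs map to the same key.
        fingerprint = hashlib.sha256(
            json.dumps(sorted(feedback_items)).encode("utf-8")
        ).hexdigest()
        key = (user_id, fingerprint)
        if key not in self._store:
            self.model_calls += 1  # only call the model for unseen data
            self._store[key] = self._generate(feedback_items)
        return self._store[key]


def fake_generate(items):
    return f"{len(items)} feedback items summarised"


cache = SummaryCache(fake_generate)
first = cache.get_summary("u1", ["Great demo", "Clear docs"])
second = cache.get_summary("u1", ["Clear docs", "Great demo"])  # same data
third = cache.get_summary("u1", ["Great demo", "Clear docs", "Fast reviews"])
```

Here the second request is served from the cache, and only the third, which includes new feedback, triggers another model call.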

Is sensitive content anonymised before being sent to the AI?

No. Sensitive content such as feedback text and goal descriptions is sent to the AI model without automatic redaction or anonymisation. This is an intentional product decision: using real names and context produces significantly better and more natural results for the user. Replacing names with placeholders before a request and restoring them in the response is not feasible without substantially degrading the experience.

Customers should be aware of this when evaluating the tool for highly sensitive use cases and ensure their own data handling policies account for it.

Does Teamspective use customer data to train AI models?

No. Teamspective does not use your data to train AI models. Any processing of your content is solely for providing and improving the specific features you use. Customer data remains your property and is handled in accordance with our Terms of Service and Data Processing Agreement.

For this reason, the AI models do not learn or adapt based on your specific feedback or usage patterns. However, Teamspective may adjust general prompts or configurations to improve the performance and relevance of AI responses system-wide.

What safeguards are in place?

Data access controls

- User session and workspace scoping: no cross-workspace data access

- Small-group engagement data is redacted to protect anonymity

- Context sent to the model is limited to what is relevant to the user's request
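
As an illustration of small-group suppression, the Python sketch below hides results for any group with fewer respondents than a minimum size. The threshold of 5 and the names `MIN_GROUP_SIZE` and `group_results` are assumptions made for this example and do not reflect Teamspective's actual configuration.

```python
from statistics import mean

# Assumed threshold for illustration only, not Teamspective's actual value.
MIN_GROUP_SIZE = 5


def group_results(scores_by_group: dict[str, list[float]]) -> dict[str, dict]:
    """Aggregate scores per group, suppressing groups that are too small."""
    results = {}
    for group, scores in scores_by_group.items():
        if len(scores) < MIN_GROUP_SIZE:
            # Too few respondents: withhold both the count and the average.
            results[group] = {"respondents": None, "average": None, "suppressed": True}
        else:
            results[group] = {
                "respondents": len(scores),
                "average": round(mean(scores), 2),
                "suppressed": False,
            }
    return results


# Hypothetical example data
results = group_results({
    "Engineering": [4, 5, 3, 4, 4, 5],
    "Legal": [2, 5],
})
```

Because suppression happens before any context is built, the model never receives the small group's scores in this sketch.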

Prompting layer guardrails

- Agent instructions include guardrails against inappropriate individual-level analysis

- Azure AI Foundry guardrails filter harmful content

- Structured outputs enforce correct format and field-level instructions
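
To show what enforcing a structured output can look like, here is a minimal Python sketch of an application-side check on a model response. It is not the provider's structured output feature itself, and the schema in `EXPECTED_FIELDS` and the function names are hypothetical.

```python
import json

# Hypothetical schema for a feedback summary: field name -> required type.
EXPECTED_FIELDS = {"summary": str, "themes": list}


def parse_model_output(raw: str) -> dict:
    """Parse a model response and reject anything that does not match the schema."""
    data = json.loads(raw)  # raises ValueError (JSONDecodeError) on invalid JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    unexpected = set(data) - set(EXPECTED_FIELDS)
    if unexpected:
        raise ValueError(f"unexpected fields: {sorted(unexpected)}")
    for name, expected_type in EXPECTED_FIELDS.items():
        if not isinstance(data.get(name), expected_type):
            raise ValueError(f"field {name!r} must be of type {expected_type.__name__}")
    return data


def is_valid(raw: str) -> bool:
    """Convenience wrapper: True if the response passes the schema check."""
    try:
        parse_model_output(raw)
        return True
    except ValueError:
        return False


good = '{"summary": "Positive overall.", "themes": ["collaboration"]}'
bad = '{"summary": "Positive overall.", "score": 7}'
```

In this sketch a response with a missing or unexpected field is rejected rather than shown to the user.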

Quality assurance

- Output quality is monitored when creating or editing AI prompts

- LLM-as-judge evaluation is used to assess outputs at scale

- Human testing is conducted as part of the development process

Training data

- Customer data is not used to train AI models, either by Teamspective or by AI providers under our agreements

How is data processing governed?

AI calls are routed through Azure-hosted AI services (Azure OpenAI), not consumer AI endpoints. This means:

- Data processing is governed by a Data Processing Agreement (DPA) and Standard Contractual Clauses (SCCs), ensuring GDPR compliance

- Azure OpenAI does not store, train on, or otherwise use your data beyond what is agreed in the DPA

- Processing does not take place on generic consumer infrastructure

Teamspective fully complies with GDPR and closely follows the evolving guidance on the EU AI Act. Our use of AI is not classified as high-risk, and we adopt a compliance-by-design approach, including risk assessments, human oversight, and transparency.

What about data retention and telemetry?

Neither Azure OpenAI nor Claude via Azure AI Foundry uses your conversation data to train AI models under their respective commercial terms.

There are some important differences between the two:

OpenAI models (via Azure)

Data is served and hosted entirely by Microsoft. OpenAI does not have access to it. Governance falls under Microsoft's terms, not OpenAI's. Data does not leave Microsoft's infrastructure. → Azure OpenAI data privacy · Microsoft Online Services Terms

Claude models (via Azure AI Foundry)

Claude is hosted by Anthropic and processed outside the EU. Data is governed by Anthropic's commercial terms, not Microsoft's. Under Anthropic's commercial agreement, data is not used for training. → Azure AI Foundry – Claude data privacy · Anthropic commercial terms

A few nuances to be aware of across both providers:

- We do not currently have Zero Data Retention (ZDR) contracts in place with either provider

- Some interactions may be retained temporarily (up to 90 days) if flagged by provider safety systems (e.g. content moderation or abuse detection). Exact terms vary by provider and should be confirmed from the links above

- Azure telemetry is configured separately; retention and privacy implications should be verified against your Azure setup and contractual terms

If your organisation has strict data residency or retention requirements, please contact us to discuss whether the current setup meets your compliance needs.

Who can access AI-generated content within our organisation?

Access to AI-generated summaries and recommendations follows role-based access control. For example, feedback summaries are only visible to the recipient (or optionally to their manager, depending on visibility and access settings). Admins and team leads can access broader engagement insights and trends based on their permissions.
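
The feedback summary example above can be expressed as a simple visibility rule. The Python sketch below is illustrative only: the `FeedbackSummary` type, the `shared_with_manager` setting, and the `can_view_summary` function are hypothetical and simplify the real visibility and access settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedbackSummary:
    recipient_id: str
    manager_id: str
    shared_with_manager: bool  # hypothetical visibility setting


def can_view_summary(viewer_id: str, summary: FeedbackSummary) -> bool:
    """The recipient can always view; the manager only if sharing is enabled."""
    if viewer_id == summary.recipient_id:
        return True
    return summary.shared_with_manager and viewer_id == summary.manager_id


# Hypothetical example data
private = FeedbackSummary("alice", "bob", shared_with_manager=False)
shared = FeedbackSummary("alice", "bob", shared_with_manager=True)
```

With these example values, `can_view_summary("bob", private)` is false and `can_view_summary("bob", shared)` is true, while a third user is denied in both cases.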

Is AI used to make decisions about individuals or teams?

No. AI in Teamspective is designed to assist, not to decide. All outputs, such as summaries or recommendations, are informational and non-binding. Decisions remain in the hands of human users, typically managers or admins.

Teamspective does not use AI for emotion recognition via biometrics, nor do we apply any form of social scoring. We focus solely on professional feedback and survey data provided by users.

Are AI-generated summaries 100% accurate?

AI-generated summaries aim to be helpful and informative, but they may occasionally simplify or misinterpret nuanced feedback. We encourage users to review the original inputs alongside AI outputs when making important decisions.

Can AI-generated results be audited or traced?

Yes. We maintain logs of interactions with the AI system and provide audit trails that allow us to trace what data was used for any specific AI output. This is part of our commitment to transparency and accountability.
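
As a rough picture of what such an audit trail can record, the Python sketch below links each AI output to the records that were used to produce it. The field names and the `AuditLog` class are assumptions for illustration and do not describe the actual log format.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    output_id: str
    user_id: str
    workspace_id: str
    source_record_ids: tuple  # IDs of the records sent as model context
    created_at: datetime


class AuditLog:
    """In-memory stand-in for a persistent audit store."""

    def __init__(self):
        self._entries = {}

    def record(self, output_id, user_id, workspace_id, source_record_ids):
        entry = AuditEntry(
            output_id,
            user_id,
            workspace_id,
            tuple(source_record_ids),
            datetime.now(timezone.utc),
        )
        self._entries[output_id] = entry
        return entry

    def trace(self, output_id):
        """Return the IDs of the records used for a given AI output."""
        return self._entries[output_id].source_record_ids


# Hypothetical example data
log = AuditLog()
log.record("out-1", "u1", "ws-a", ["fb-10", "fb-11"])
traced = log.trace("out-1")
```

Storing record IDs rather than the record contents keeps the log itself from duplicating sensitive text, which is a design choice of this sketch rather than a statement about the real system.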

Can we opt out of AI-powered features?

If your organisation prefers to disable certain AI-powered capabilities, please contact our support team. We can help you configure features to meet your compliance or data handling requirements.

Where can I learn more about Teamspective’s data handling practices?

For more details, please review:

For customers requiring formal review

We are happy to provide:

- Our Data Processing Agreement (DPA)

- Sub-processor list (including Azure OpenAI and Anthropic)

- A direct conversation between your DPO and ours for any formal data protection assessment

Please reach out to your Customer Success contact or support@teamspective.com to initiate this.

---